XML-formatted Configuration Management (CM) data files, published by 2G, 3G, 4G, and 5G mobile networks via the Northbound Interface (NBI) of the OSS (Operations Support System), are very common. The corresponding standards and specifications are developed by 3GPP.

Processing these XML files can be a hassle because of their size: tens of gigabytes are common for nationwide configuration files. Processing a single such file can block a server for more than an hour.

Just a big list of network elements

Basically, XML files containing network-related CM data (also called topology files) are a big list of all network elements and their configuration. A topology file typically looks something like this:

```xml
...
<fileHeader ... />
<configData ...>
  <element name="ElementName1">
    element1_config
  </element>
  <element name="ElementName2">
    element2_config
  </element>
  ...
  <element name="ElementNameN">
    elementN_config
  </element>
</configData>
<fileFooter ... />
...
```

XML File Size

The size of a topology file depends mainly on the number of network elements it describes. File sizes range from several hundred megabytes for small networks up to tens of gigabytes for networks with millions of elements.

DOM and event streaming parsing

In general, there are two ways of parsing XML:

- Document Object Model (DOM) parsing

- Event streaming parsing

A DOM parser loads the complete XML file into memory and builds a full tree representation of the data. The complete hierarchy stays available in memory while the data is processed.
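As a minimal sketch of the DOM approach, Python's standard-library xml.dom.minidom parses the whole document into an in-memory tree before any data can be queried. The tiny topology snippet below is hypothetical and follows the simplified element/name layout shown earlier, not the real 3GPP schema.

```python
from xml.dom import minidom

# Hypothetical, heavily simplified topology document (not the real 3GPP schema).
TOPOLOGY = """<root>
  <fileHeader/>
  <configData>
    <element name="ElementName1"><param>1</param></element>
    <element name="ElementName2"><param>2</param></element>
  </configData>
  <fileFooter/>
</root>"""


def element_names_dom(xml_text: str) -> list[str]:
    # DOM: the entire document is parsed into a tree held in memory at once.
    doc = minidom.parseString(xml_text)
    # Because the full hierarchy is available, it can be searched freely.
    return [el.getAttribute("name") for el in doc.getElementsByTagName("element")]


print(element_names_dom(TOPOLOGY))  # ['ElementName1', 'ElementName2']
```

This is convenient for small files, but memory use grows with the whole document, which is exactly what becomes a problem at tens of gigabytes.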

Event streaming parsing, by contrast, is event-based: the XML file is read serially, no complete tree structure is stored in memory, and the application handles events such as XMLEvent::StartElement and XMLEvent::EndElement as they occur during processing.
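To illustrate the event sequence, here is a small sketch using Python's xml.etree.ElementTree.iterparse, which reads the input incrementally and yields a "start" or "end" event per tag. Note that iterparse still attaches parsed elements to a growing tree unless they are explicitly cleared with elem.clear(). The sample document is hypothetical.

```python
import io
import xml.etree.ElementTree as ET

# Hypothetical, heavily simplified topology document.
TOPOLOGY = b"""<root>
  <configData>
    <element name="ElementName1"><param>1</param></element>
    <element name="ElementName2"><param>2</param></element>
  </configData>
</root>"""


def event_trace(source) -> list[tuple[str, str]]:
    """Collect (event, tag) pairs while reading the document serially."""
    trace = []
    # Events arrive in document order as tags are opened and closed.
    for event, elem in ET.iterparse(source, events=("start", "end")):
        trace.append((event, elem.tag))
    return trace


for event, tag in event_trace(io.BytesIO(TOPOLOGY)):
    print(event, tag)
```

The printed trace starts with "start root", then "start configData", and ends with "end configData" and "end root"; each element contributes one start and one end event.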

To avoid blocking servers by consuming excessive amounts of memory, event streaming parsing should therefore be used for huge XML files.

Divide and conquer

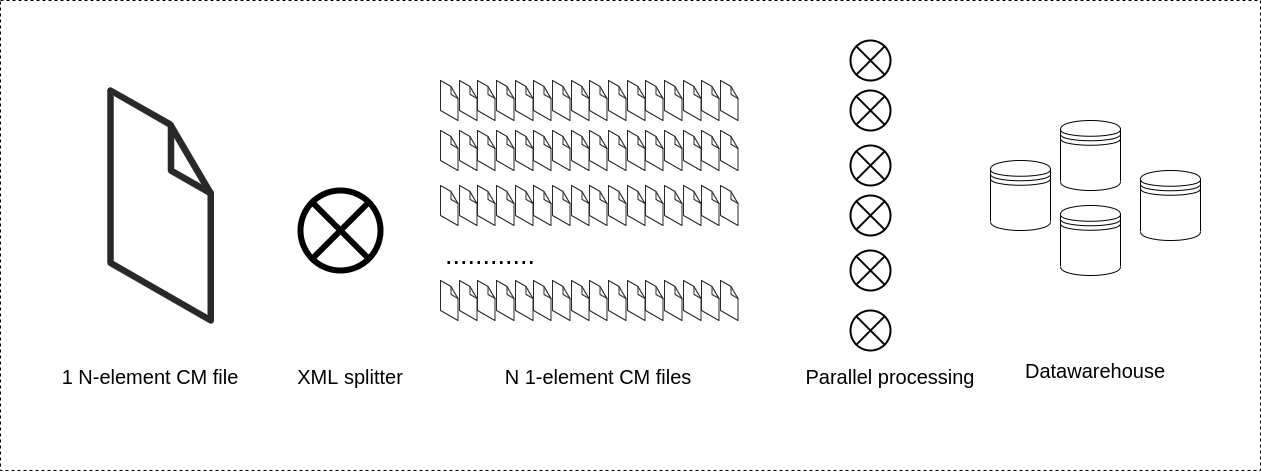

A large XML-formatted topology file is, in essence, a big list of network element configurations. To parse such a file with good performance and without blocking servers, it can be split into smaller files.

Splitting a large XML file that contains the configurations of N elements into N small files, each holding the configuration of a single element, takes a single pass with a streaming parser. Such a run is not very resource-demanding and has minimal impact on overall system performance.
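The splitting step can be sketched with iterparse as follows: each completed element is written to its own file and then cleared, so memory stays roughly bounded by one element rather than the whole file. The function split_topology, the tag name "element", and the numbered file names are hypothetical; real 3GPP files use namespaced tags (for example "{namespace}Tag"), which would need to be matched instead.

```python
import io
import os
import tempfile
import xml.etree.ElementTree as ET


def split_topology(source, out_dir: str, tag: str = "element") -> list[str]:
    """Hypothetical splitter: write every <tag> element to its own file."""
    paths = []
    # Only "end" events are requested: at that point the element is complete.
    for _event, elem in ET.iterparse(source, events=("end",)):
        if elem.tag != tag:
            continue
        # Numbered names avoid unsafe characters that element names may contain.
        path = os.path.join(out_dir, f"{len(paths):06d}.xml")
        # Serialize just this element (with its children) as a standalone file.
        ET.ElementTree(elem).write(path, encoding="utf-8", xml_declaration=True)
        paths.append(path)
        # Drop the element's children and text so memory does not keep growing.
        elem.clear()
    return paths


TOPOLOGY = b"""<root><configData>
<element name="ElementName1"><param>1</param></element>
<element name="ElementName2"><param>2</param></element>
</configData></root>"""

with tempfile.TemporaryDirectory() as out_dir:
    for path in split_topology(io.BytesIO(TOPOLOGY), out_dir):
        print(os.path.basename(path), ET.parse(path).getroot().get("name"))
```

Running it prints "000000.xml ElementName1" and "000001.xml ElementName2". For a real file, source would be a path or an open binary file instead of an in-memory buffer.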

The resulting files can then be processed in a controlled, scheduled way, even in parallel on a cluster of nodes. This prevents servers from being blocked and makes processing much faster.
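On a single machine, the parallel step can be approximated with concurrent.futures, as in this minimal sketch. The per-file job process_element_file (returning an element's name and child count) is a hypothetical placeholder for real processing. Threads are used to keep the sketch portable; for CPU-bound parsing, a ProcessPoolExecutor or a cluster scheduler would provide true parallelism.

```python
import os
import tempfile
import xml.etree.ElementTree as ET
from concurrent.futures import ThreadPoolExecutor


def process_element_file(path: str) -> tuple[str, int]:
    """Hypothetical per-file job: return the element name and its child count."""
    root = ET.parse(path).getroot()  # small file, so a full tree is cheap here
    return root.get("name"), len(root)


def process_all(paths: list[str], workers: int = 4) -> dict[str, int]:
    # pool.map runs the jobs concurrently and yields results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(process_element_file, paths))


with tempfile.TemporaryDirectory() as out_dir:
    paths = []
    for i, n_params in enumerate((1, 3)):
        path = os.path.join(out_dir, f"{i:06d}.xml")
        params = "".join(f"<param>{k}</param>" for k in range(n_params))
        with open(path, "w", encoding="utf-8") as f:
            f.write(f'<element name="ElementName{i + 1}">{params}</element>')
        paths.append(path)
    results = process_all(paths)

print(results)  # {'ElementName1': 1, 'ElementName2': 3}
```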

Another benefit of splitting a large file into smaller ones is fault isolation: if one element reports incorrectly formatted data, the other configurations can still be processed. This makes the overall XML processing more robust.
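The fault-isolation argument can be shown in a few lines: each small file is parsed independently, and a malformed one is recorded and skipped instead of aborting the whole run. The helper process_files and the sample contents are hypothetical.

```python
import os
import tempfile
import xml.etree.ElementTree as ET


def process_files(paths):
    """Parse each file on its own; a malformed file is reported, not fatal."""
    ok, failed = {}, []
    for path in paths:
        try:
            root = ET.parse(path).getroot()
        except ET.ParseError as err:
            # Only this element's configuration is lost; the loop continues.
            failed.append((os.path.basename(path), str(err)))
            continue
        ok[root.get("name")] = len(root)
    return ok, failed


SAMPLES = [
    '<element name="ElementName1"><param>1</param></element>',
    '<element name="ElementName2"><param>2</element>',  # malformed: <param> not closed
    '<element name="ElementName3"><param>3</param></element>',
]

with tempfile.TemporaryDirectory() as out_dir:
    paths = []
    for i, text in enumerate(SAMPLES):
        path = os.path.join(out_dir, f"{i:06d}.xml")
        with open(path, "w", encoding="utf-8") as f:
            f.write(text)
        paths.append(path)
    ok, failed = process_files(paths)

print(ok)  # {'ElementName1': 1, 'ElementName3': 1}
print(failed)
```

Had the same error occurred inside one monolithic file, a streaming parser would typically stop at that point, leaving every later element unprocessed.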

Implementation

Most programming languages support both DOM and event streaming parsing of XML, either out of the box or through a de facto standard library.

For most object-oriented languages, such as Python, C++, and Java, a SAX (Simple API for XML) library is available. SAX is a de facto standard, event-driven API for streaming parsing.
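For Python, the standard library ships a SAX implementation in xml.sax. The sketch below subclasses ContentHandler and collects the name attribute of every element as the parser streams through the input; the handler class and the sample document are hypothetical.

```python
import io
import xml.sax


class ElementNameHandler(xml.sax.ContentHandler):
    """Collects the name attribute of every <element> while parsing."""

    def __init__(self):
        super().__init__()
        self.names = []

    def startElement(self, name, attrs):
        # Called once per opening tag; attrs behaves like a read-only mapping.
        if name == "element":
            self.names.append(attrs.get("name"))


TOPOLOGY = b"""<root><configData>
<element name="ElementName1"><param>1</param></element>
<element name="ElementName2"><param>2</param></element>
</configData></root>"""

handler = ElementNameHandler()
xml.sax.parse(io.BytesIO(TOPOLOGY), handler)
print(handler.names)  # ['ElementName1', 'ElementName2']
```

Unlike the tree-based approach, the handler only keeps what it explicitly stores, so memory use does not depend on the file size.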

Event-based XML parsing libraries are also available for modern languages that are not object-oriented, such as Rust and Haskell.

An example of an XML splitter written in Rust is available here.

Contact us

Feel free to contact us if you want to know more about processing large XML files or about the Rust example. We process this type of data every day.

Also, don’t hesitate to contact us for more information about 1OPTIC, our high-performance, multi-vendor, cross-domain data processing solution, which is used, among other things, to process large XML files containing configuration data.